The Data Problem in Medical AI and How a Generative Adversarial Network Helped Us

Early detection of lung cancer saves lives. When a pulmonary nodule, a small lump in the lung tissue, is caught early enough, treatment options are far better and survival rates improve significantly. The challenge is that spotting these nodules on a chest MRI scan requires a trained radiologist, a careful eye, and a lot of time. It is not something you can automate easily or rush.

We were exploring whether AI could help with exactly this as part of a proof of concept. The idea was to train a model on labelled MRI scans so it could flag potential nodules automatically, giving radiologists a faster starting point. This was an early-stage investigation, not a production system, and everything described here is still going through internal evaluation before any further steps are considered.

When we sat down with the available data, the first real problem showed up. After going through everything we could access, we had around 1,400 labelled scans in the nodule-positive category. Getting more was not simple. Hospitals cannot just hand over patient scans because there are privacy laws, consent requirements, and legal agreements involved. And even after you have the scans, a radiologist has to manually review and label each one, which is slow and expensive. We were stuck.

The model trained on those 1,400 scans was overfitting, meaning it performed well on scans it had already seen but struggled on new ones. It had memorised the examples rather than actually learning to detect nodules. We needed more data, and we needed it without waiting another six months.

That is where a GAN came in.

A GAN, which stands for Generative Adversarial Network, is a type of AI model that learns from existing images and generates new synthetic ones that closely resemble them. As part of this proof of concept, the idea was to use it to create additional MRI scan variations and add them to our training set, not as replacements for real clinical data, but as a way to explore whether this approach could address the data shortage. This article explains how GANs work and how we built one to test this idea.

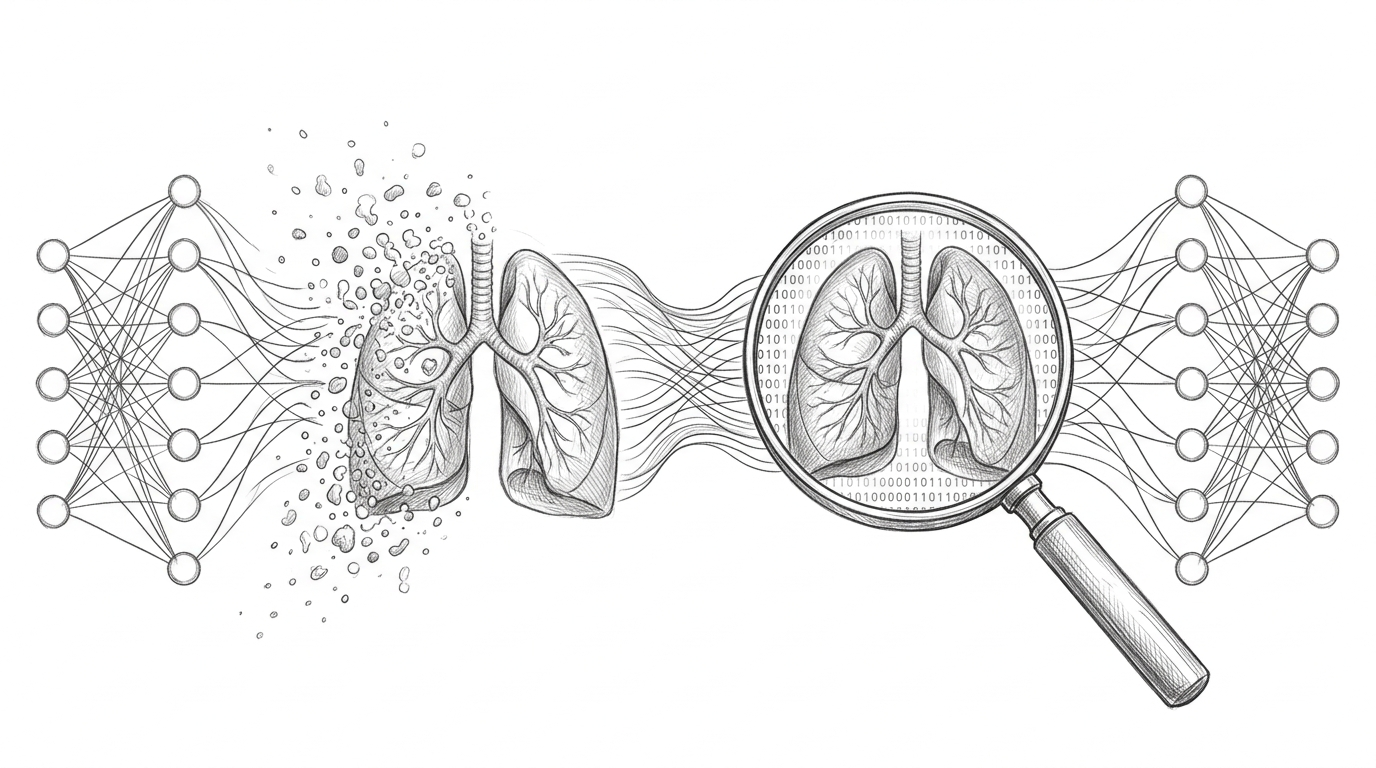

A GAN has two parts inside it, two AI models that work against each other. The first part is called the generator. Its only job is to create fake images. It starts with random noise, basically random numbers, and tries to turn that into something that looks like a real image. The second part is called the discriminator. Its job is to look at an image and decide whether it is real or fake.

These two parts train together. The generator keeps creating fake images to try to fool the discriminator. The discriminator keeps studying those fakes to get better at catching them. Every time the discriminator spots a fake, the generator learns from that and tries to do better next time. Every time the generator fools the discriminator, the discriminator learns from that too. This back-and-forth goes on for thousands of rounds, and in the ideal case the generator gets so good that even the discriminator cannot tell the difference between real and fake.

Think of it like a forger and an art inspector. The forger is making fake paintings. The inspector keeps catching him: wrong canvas texture, brushstrokes that do not match the style, varnish applied too evenly. Each time the inspector points out a flaw, the forger improves. After years of this, the forger is so skilled that even expert inspectors cannot find anything wrong. The inspector’s pressure is what made the forger this good. That is essentially how GAN training works.
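For readers who prefer notation, the same tug-of-war is usually written as a two-player objective. This is the standard formulation from the original GAN literature, not something specific to our setup: $D(x)$ is the discriminator's confidence that $x$ is real, $G(z)$ is the image the generator builds from noise $z$, and the symbols $p_{\text{data}}$ and $p_z$ stand for the distributions of real scans and of noise.

$$
\min_G \max_D \; \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
$$

The discriminator tries to push this value up by scoring real scans high and fakes low, while the generator tries to push it down by making $D(G(z))$ large.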

Here is a high-level picture of how the two networks connect and what flows between them.

flowchart LR

A([Random Noise]) --> B[Generator]

B --> C([Fake Image])

D([Real MRI Scan]) --> E{Discriminator}

C --> E

E --> F([Real or Fake?])

F -->|Loss fed back| B

F -->|Loss fed back| E

The type of GAN we used is called a DCGAN, which stands for Deep Convolutional GAN. Convolutional layers are well suited to images because they look at small local patterns like edges and textures before building up to larger structures. That makes them a much better fit for image generation than plain fully connected networks. The generator took random noise as input and gradually built it up into a 256x256 grayscale image, the same size as our MRI scans. The discriminator took a 256x256 image and gave back one number, its confidence that the image was real.
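To make the shapes concrete, here is a minimal sketch of what a DCGAN pair for 256x256 grayscale images can look like in PyTorch. It is an illustration, not our exact architecture: the latent size of 100, the channel widths, and the helper names `up_block` and `down_block` are all assumptions made for the example.

```python
import torch
from torch import nn

LATENT_DIM = 100  # assumed noise vector size; the article does not state it


def up_block(in_ch: int, out_ch: int) -> nn.Sequential:
    """Transposed convolution that doubles height and width."""
    return nn.Sequential(
        nn.ConvTranspose2d(in_ch, out_ch, kernel_size=4, stride=2, padding=1, bias=False),
        nn.BatchNorm2d(out_ch),
        nn.ReLU(inplace=True),
    )


def down_block(in_ch: int, out_ch: int) -> nn.Sequential:
    """Strided convolution that halves height and width."""
    return nn.Sequential(
        nn.Conv2d(in_ch, out_ch, kernel_size=4, stride=2, padding=1, bias=False),
        nn.LeakyReLU(0.2, inplace=True),
    )


class Generator(nn.Module):
    def __init__(self, latent_dim: int = LATENT_DIM, base: int = 16):
        super().__init__()
        self.net = nn.Sequential(
            # noise reshaped to (latent_dim, 1, 1) becomes a 4x4 feature map
            nn.ConvTranspose2d(latent_dim, base * 32, 4, 1, 0, bias=False),
            nn.BatchNorm2d(base * 32),
            nn.ReLU(inplace=True),
            up_block(base * 32, base * 16),  # 8x8
            up_block(base * 16, base * 8),   # 16x16
            up_block(base * 8, base * 4),    # 32x32
            up_block(base * 4, base * 2),    # 64x64
            up_block(base * 2, base),        # 128x128
            nn.ConvTranspose2d(base, 1, 4, 2, 1, bias=False),  # 256x256, one channel
            nn.Tanh(),  # pixel values in [-1, 1]
        )

    def forward(self, z: torch.Tensor) -> torch.Tensor:
        return self.net(z.view(z.size(0), -1, 1, 1))


class Discriminator(nn.Module):
    def __init__(self, base: int = 16):
        super().__init__()
        self.net = nn.Sequential(
            down_block(1, base),               # 128x128
            down_block(base, base * 2),        # 64x64
            down_block(base * 2, base * 4),    # 32x32
            down_block(base * 4, base * 8),    # 16x16
            down_block(base * 8, base * 16),   # 8x8
            down_block(base * 16, base * 32),  # 4x4
            nn.Conv2d(base * 32, 1, 4, 1, 0, bias=False),  # single value per image
        )

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        # Returns a raw logit per image; torch.sigmoid turns it into a confidence.
        return self.net(x).view(-1)
```

Calling `Generator()(torch.randn(2, LATENT_DIM))` yields a tensor of shape `(2, 1, 256, 256)`, and passing that to `Discriminator()` yields one score per image.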

For the tools, we used PyTorch to build and train the models. We chose it because GAN training needs a custom loop in which the generator and discriminator are updated separately, one after the other, and PyTorch makes that kind of control straightforward to write.

For loading and preprocessing the scans, we used MONAI, a library built specifically for medical imaging. Medical scans are stored in a specialised format called DICOM rather than a regular image file like JPG or PNG, and MONAI's loaders can read it directly. It also provides augmentations suited to clinical data. For example, you can flip or slightly rotate an MRI scan and it is still a valid training example, but you cannot aggressively stretch or colour-shift it the way you might with a regular photo, because that would distort clinically relevant details.

We tracked everything using Weights & Biases, an experiment tracking tool. During GAN training you are constantly watching the generator loss and discriminator loss, and if one pulls away from the other too quickly, training goes wrong. Without proper tracking, debugging a GAN is like flying blind. The whole training run took around 14 hours for 200 epochs, running on NVIDIA A100 GPUs on a cloud platform.

The training loop itself is different from a standard supervised training loop. The diagram below shows the two separate update steps that happen in every single round.

sequenceDiagram

participant R as Real Scans

participant G as Generator

participant D as Discriminator

Note over G,D: Step 1 - Train the Discriminator

R->>D: Show real scans (label 0.9)

G->>D: Show fake scans (label 0.1)

D->>D: Compute loss, update weights

Note over G,D: Step 2 - Train the Generator

G->>D: Send new fake scans

D->>G: Return confidence score

G->>G: Compute loss, update weights

In each round, you first train the discriminator by showing it a mix of real scans and freshly generated fake scans and letting it practise telling them apart. Then, separately, you train the generator by generating new fakes, passing them through the discriminator, and updating the generator based on how well it fooled it. The important thing is that each network gets its own loss and its own update step. If the two signals are mixed together, training tends to become unstable and can collapse. We also used a small trick called label smoothing, where instead of telling the discriminator that a real image scores exactly 1.0 and a fake scores exactly 0.0, we used 0.9 and 0.1. This stops the discriminator from becoming overconfident too early, which would leave the generator with very little useful training signal.
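The two-step round with smoothed labels can be sketched as a single function. This is a simplified illustration rather than our training script: the tiny stand-in networks, the Adam settings, and the choice of 1.0 as the generator's target are assumptions made so the example runs on its own. Only the 0.9 and 0.1 discriminator targets come from the description above.

```python
import torch
from torch import nn

REAL_LABEL, FAKE_LABEL = 0.9, 0.1  # smoothed discriminator targets


def train_step(G, D, opt_g, opt_d, real, latent_dim):
    """One round: update the discriminator, then the generator.

    D is expected to return raw logits of shape (batch,).
    """
    bce = nn.BCEWithLogitsLoss()
    batch, device = real.size(0), real.device

    # Step 1: train the discriminator on real scans and detached fakes.
    opt_d.zero_grad()
    fake = G(torch.randn(batch, latent_dim, device=device))
    loss_d = (
        bce(D(real), torch.full((batch,), REAL_LABEL, device=device))
        # detach() keeps this loss from sending gradients into the generator
        + bce(D(fake.detach()), torch.full((batch,), FAKE_LABEL, device=device))
    )
    loss_d.backward()
    opt_d.step()

    # Step 2: train the generator on fresh fakes, scored by the updated D.
    opt_g.zero_grad()
    fake = G(torch.randn(batch, latent_dim, device=device))
    # Assumed target of 1.0: the generator wants its fakes judged real.
    loss_g = bce(D(fake), torch.ones(batch, device=device))
    loss_g.backward()
    opt_g.step()  # only the generator's weights change here

    return loss_d.item(), loss_g.item()


# Tiny stand-in networks so the sketch is runnable; not the real DCGAN.
torch.manual_seed(0)
latent_dim = 8
G = nn.Sequential(nn.Linear(latent_dim, 16), nn.Tanh())
D = nn.Sequential(nn.Linear(16, 1), nn.Flatten(0))
opt_g = torch.optim.Adam(G.parameters(), lr=2e-4, betas=(0.5, 0.999))
opt_d = torch.optim.Adam(D.parameters(), lr=2e-4, betas=(0.5, 0.999))
real = torch.randn(4, 16)  # placeholder for a batch of real scans

loss_d, loss_g = train_step(G, D, opt_g, opt_d, real, latent_dim)
print(f"discriminator loss {loss_d:.3f}, generator loss {loss_g:.3f}")
```

The `detach()` call in step 1 is what keeps the two updates separate: the discriminator learns from the fakes without any gradient reaching the generator.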

Around epoch 40, about one-fifth of the way through training, something went wrong. The losses looked fine on the graph. The discriminator could no longer tell real from fake, which should mean the generator is doing a great job. But when we actually looked at the generated images, they were all nearly identical. Different noise inputs were producing the same scan over and over again.
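Because the loss curves alone did not reveal the problem, a direct diversity measure on the generated batch is a useful extra signal. The sketch below is one simple way to do that and is an assumption on our part, not a metric from the original run: it averages the pixel-space distance between every pair of generated images, so a value near zero means different noise inputs are producing nearly the same scan.

```python
import torch
import torch.nn.functional as F


def mean_pairwise_distance(images: torch.Tensor) -> float:
    """Average L2 distance between all distinct pairs in a batch of images."""
    flat = images.flatten(start_dim=1).float()  # (n, pixels)
    return F.pdist(flat).mean().item()          # one distance per unordered pair


torch.manual_seed(0)
varied = torch.randn(8, 1, 32, 32)                       # stand-in for a healthy batch
collapsed = torch.randn(1, 1, 32, 32).repeat(8, 1, 1, 1)  # same image eight times

print(mean_pairwise_distance(varied))     # clearly above zero
print(mean_pairwise_distance(collapsed))  # zero: every pair is identical
```

Logging this number alongside the two losses at each epoch would make a drop towards zero visible without anyone having to eyeball image grids.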

This is called mode collapse. The generator had found one type of output that consistently fooled the discriminator and just kept producing that instead of exploring the full variety of what a real scan looks like. It took the easy way out. We fixed it with two changes. The first was experience replay, where we kept a history of previously generated images and randomly mixed them into the discriminator’s training batches. This stops the discriminator from only learning to catch the very latest tricks the generator is using, which in turn forces the generator to stay diverse. The second was spectral normalisation, a technique that stops the discriminator from changing its outputs too dramatically in response to small changes in input. Without it, the discriminator sends the generator confusing signals. After both changes, by around epoch 60, the generator started producing genuinely varied scans again.
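Both fixes are small in code. The sketch below shows one possible shape for each, under stated assumptions: the `ReplayBuffer` class, its capacity, and the 50% replay fraction are illustrative choices, and `sn_down_block` is a hypothetical helper. The only library feature relied on is `torch.nn.utils.spectral_norm`, which wraps a layer so its weight is rescaled by its largest singular value.

```python
import random

import torch
from torch import nn
from torch.nn.utils import spectral_norm


class ReplayBuffer:
    """Keeps a bounded history of generated images for the discriminator."""

    def __init__(self, capacity: int = 512):
        self.capacity = capacity
        self.images = []

    def push(self, fakes: torch.Tensor) -> None:
        """Store new fakes, overwriting random old entries once full."""
        for img in fakes.detach().cpu():
            if len(self.images) < self.capacity:
                self.images.append(img)
            else:
                self.images[random.randrange(self.capacity)] = img

    def mix(self, fakes: torch.Tensor, replay_fraction: float = 0.5) -> torch.Tensor:
        """Swap a fraction of the current fakes for older ones, if any exist."""
        k = min(int(fakes.size(0) * replay_fraction), len(self.images))
        if k == 0:
            return fakes.detach()
        old = torch.stack(random.sample(self.images, k)).to(fakes.device)
        return torch.cat([fakes.detach()[k:], old], dim=0)


def sn_down_block(in_ch: int, out_ch: int) -> nn.Sequential:
    """Discriminator block whose convolution is spectrally normalised."""
    return nn.Sequential(
        spectral_norm(nn.Conv2d(in_ch, out_ch, kernel_size=4, stride=2, padding=1)),
        nn.LeakyReLU(0.2, inplace=True),
    )


# Usage inside the discriminator step (fake comes from the generator):
buffer = ReplayBuffer(capacity=64)
fake = torch.randn(8, 1, 32, 32)     # stand-in for generator output
d_fakes = buffer.mix(fake)           # first call: buffer is empty, all current
buffer.push(fake)
d_fakes = buffer.mix(torch.randn(8, 1, 32, 32))  # now half old, half new
```

Mixing before pushing means the discriminator never sees the same fresh image twice in one batch, and the batch size it trains on stays constant.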

Once training was done, we could not just look at a few images and say it looked fine. As part of the proof of concept evaluation, we took 200 images, 100 real scans and 100 synthetic ones, shuffled them, and gave them to two radiologists without telling them which was which. They were asked to classify each image as real or fake. They misclassified 38% of the synthetic scans as real. If the fakes were completely indistinguishable we would expect that figure to sit near 50%, so 38% falls short of that but is still a promising early signal. It suggests a meaningful share of the fakes were realistic enough to pass an initial visual review by clinical experts.
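With only 100 synthetic images, a single percentage carries a fair amount of uncertainty. As a rough illustration, the sketch below puts a normal-approximation 95% interval around the fooling rate. It assumes the 38% corresponds to 38 of the 100 synthetic scans being judged real, which is a simplification, since how the two radiologists' answers were pooled is not broken down here. The function name is hypothetical.

```python
import math


def fooling_rate_interval(n_fooled: int, n_total: int, z: float = 1.96):
    """Share of synthetic scans judged real, with a normal-approximation interval."""
    p = n_fooled / n_total
    half_width = z * math.sqrt(p * (1 - p) / n_total)
    return p, max(0.0, p - half_width), min(1.0, p + half_width)


# Assumption: 38% of 100 synthetic scans means 38 images judged real.
rate, low, high = fooling_rate_interval(38, 100)
print(f"{rate:.2f} ({low:.2f} to {high:.2f})")  # 0.38 (0.28 to 0.48)
```

Under that assumption the interval stops just short of 50%, which is one more reason to treat the result as an early signal rather than proof that the fakes are indistinguishable.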

To understand whether the synthetic data actually helped, we trained three versions of the detection model: one on real data only, one on real data plus basic augmentation like flips and rotations, and one on real data plus GAN-generated synthetic scans. The GAN-augmented model showed a 6.4 percentage point improvement in sensitivity compared to the real-data-only baseline. In plain terms, it caught more of the actual nodules, and in our runs that did not come with an increase in false alarms. These are encouraging proof of concept numbers, but they are not clinical validation. The model has not been tested on independent datasets across different hospitals, scanner types, or patient demographics, and that work would need to happen before any real conclusions can be drawn.
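For anyone unfamiliar with the metric, sensitivity is the share of true nodules the model catches, and it has to be read alongside specificity, which tracks false alarms. The sketch below shows how a percentage point gain is computed. The counts are entirely hypothetical and chosen for round numbers; they are not our results.

```python
def sensitivity(tp: int, fn: int) -> float:
    """Share of nodule-positive scans the model flags (true positive rate)."""
    return tp / (tp + fn)


def specificity(tn: int, fp: int) -> float:
    """Share of nodule-free scans the model correctly leaves unflagged."""
    return tn / (tn + fp)


# Hypothetical counts on a test set of 100 positive and 100 negative scans.
baseline = {"tp": 70, "fn": 30, "tn": 90, "fp": 10}
augmented = {"tp": 75, "fn": 25, "tn": 90, "fp": 10}

gain_pp = 100 * (
    sensitivity(augmented["tp"], augmented["fn"])
    - sensitivity(baseline["tp"], baseline["fn"])
)
same_false_alarms = (
    specificity(augmented["tn"], augmented["fp"])
    == specificity(baseline["tn"], baseline["fp"])
)
print(f"sensitivity gain: {gain_pp:.1f} percentage points")
print(f"specificity unchanged: {same_false_alarms}")
```

A gain in sensitivity only counts as an improvement if specificity holds steady, which is why both numbers are compared here.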

The proof of concept is currently under internal review. The next steps being evaluated include a broader audit of the synthetic data pipeline, testing on a more diverse dataset, and understanding what regulatory and clinical governance requirements would apply if this approach were to move further. Nothing from this experiment is being used in any clinical setting.

GANs are not a free lunch. Synthetic data does not replace real data, and the numbers from a proof of concept should never be mistaken for production readiness. A GAN can only generate what it has seen, so if the training scans skewed towards certain patient types or nodule shapes, the GAN reflects that gap rather than fills it. There is also a memorisation risk. A GAN trained too aggressively on a small dataset can start reproducing its training examples. We checked for this by comparing generated images against the training set using similarity scores, and nothing raised a flag, but this kind of check would need to be far more rigorous in any formal audit.
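A memorisation check of this kind can be as simple as finding, for each synthetic image, its closest match in the training set. The sketch below uses normalised cross-correlation on raw pixels as the similarity score. That metric, the function name, and any threshold you might apply to it are assumptions for illustration, and a formal audit would likely compare images in a learned feature space as well.

```python
import numpy as np


def nearest_train_similarity(synthetic: np.ndarray, train: np.ndarray) -> np.ndarray:
    """For each synthetic image, the highest correlation with any training image.

    Both inputs have shape (n_images, height, width). Values near 1.0 suggest
    a synthetic image is close to a copy of a training scan.
    """
    s = synthetic.reshape(len(synthetic), -1).astype(np.float64)
    t = train.reshape(len(train), -1).astype(np.float64)
    # Remove each image's mean brightness, then scale to unit length,
    # so the dot product below is a correlation in [-1, 1].
    s -= s.mean(axis=1, keepdims=True)
    t -= t.mean(axis=1, keepdims=True)
    s /= np.linalg.norm(s, axis=1, keepdims=True) + 1e-12
    t /= np.linalg.norm(t, axis=1, keepdims=True) + 1e-12
    return (s @ t.T).max(axis=1)


rng = np.random.default_rng(0)
train = rng.normal(size=(5, 8, 8))                      # stand-in training scans
copied = train[2:3].copy()                              # an exact memorised copy
novel = rng.normal(size=(1, 8, 8))                      # an unrelated image
scores = nearest_train_similarity(np.concatenate([copied, novel]), train)
print(scores)  # first score is about 1.0, second is much lower
```

Sorting synthetic images by this score and reviewing the top few by eye is a cheap first pass before investing in a more rigorous audit.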

What this proof of concept showed is that the idea is worth investigating further. GANs can generate convincing medical image variations, and that has real potential for addressing data scarcity in clinical AI. Whether it holds up under proper scrutiny is the question the evaluation is trying to answer.